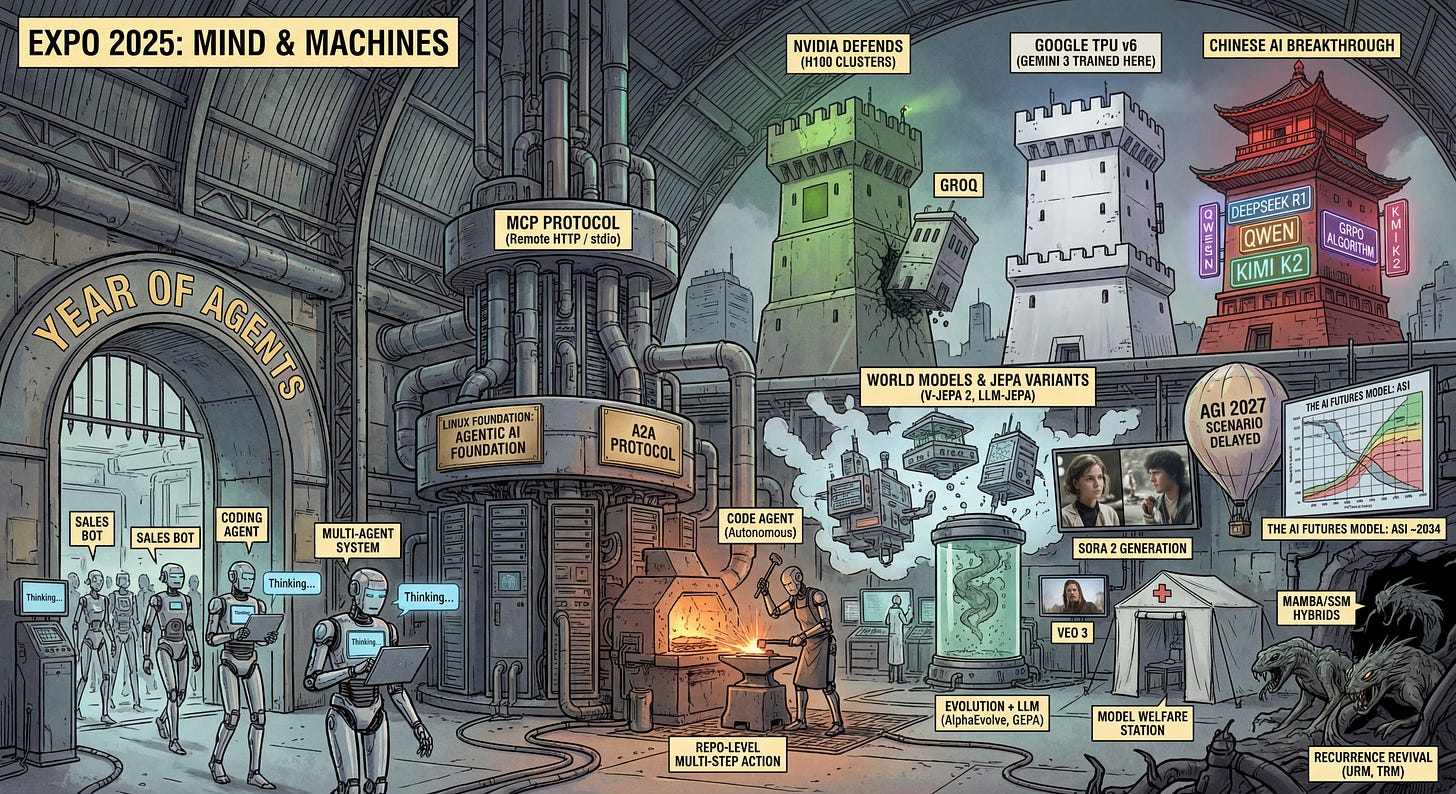

2025: The Year in Review

Agents, Chinese AI and AGI Delayed

I’m continuing my tradition of year-end reflections; I did something similar for 2024. Once again, I didn’t spend much time on detailed analysis and tried to compile my list relatively quickly. Writing the actual text took longer, though 🙂

Here’s what surfaced in my memory about the past year:

🤖 1. Year of Agents

2025 was undeniably the year of agents (and, to some extent, multi-agent systems). It feels like yet another wave in a series of trends: first the ML wave, then the AI wave, and now the agents wave. They’re everywhere now. Startups are replacing “Loading…” with “Thinking…”, and agents are being integrated into every industry: sales agents, marketing agents, coding agents, you name it.

We’re talking about LLM or AI agents here, though there can certainly be other types without any AI involved.

No unified definition of an agent has emerged (much like with AI itself), but that’s not particularly important. Agents typically refer to entities with some level of autonomy, which can vary dramatically—from virtually none to quite substantial. An agent usually has access to tools for interacting with the world (calling APIs, searching databases, executing code and OS commands, etc.), often (but not always) has some form of memory, and performs reasoning using LLMs—hence their probabilistic nature and frequent lack of “any number of nines” reliability.
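To make the description above concrete, here is a minimal sketch of such a loop. Everything in it is hypothetical: `fake_llm` is a deterministic stand-in for a real (probabilistic) model call, `add` stands in for a real tool, and the `max_steps` budget is just one simple way to keep an agent from looping forever.

```python
from typing import Callable


def add(a: float, b: float) -> float:
    """Hypothetical tool; a real agent might call an API or run code here."""
    return a + b


# Registry of tools the agent is allowed to use (illustrative only).
TOOLS: dict[str, Callable[..., object]] = {"add": add}


def fake_llm(goal: str, memory: list[dict]) -> dict:
    """Stand-in for an LLM call that picks the next action.

    A real model is probabilistic; this stub is deterministic so the
    sketch can be run and checked.
    """
    if not memory:
        return {"action": "tool", "name": "add", "args": {"a": 2, "b": 3}}
    return {"action": "finish", "answer": f"The result is {memory[-1]['result']}"}


def run_agent(goal: str, max_steps: int = 5) -> str:
    memory: list[dict] = []  # simple memory: a log of past tool results
    for _ in range(max_steps):
        decision = fake_llm(goal, memory)  # "reasoning" step
        if decision["action"] == "finish":
            return decision["answer"]
        tool = TOOLS[decision["name"]]  # look up the requested tool
        result = tool(**decision["args"])  # act on the world
        memory.append({"tool": decision["name"], "result": result})
    return "Gave up: step budget exhausted"


if __name__ == "__main__":
    print(run_agent("What is 2 + 3?"))
```

The level of autonomy is set by how much of the decision-making is left to the model call and how large the step budget is.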

Traditional engineering thinks in terms of reliability and often counts “nines”: three nines (99.9%, about 8.76 hours of downtime per year) is the minimum standard, five nines (99.999%, about 5 minutes of downtime per year) is the standard for critical services, and some exotic systems require and deliver even more (there’s the legendary Ericsson AXD301 switch with Erlang-based software, reported to deliver nine nines, or about 32 milliseconds of downtime per year).

*Of course, there’s a separate question about what exactly is being measured here; I’ve been somewhat loose with this myself, mixing reliability and availability, but it doesn’t change the essence of the argument.
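The downtime figures for each level of “nines” are simple arithmetic. A small sketch, assuming a 365-day year and treating “nines” as availability (the function name is mine, for illustration only):

```python
SECONDS_PER_YEAR = 365 * 24 * 3600  # assumes a non-leap year


def downtime_seconds_per_year(nines: int) -> float:
    """Allowed downtime per year for an availability with `nines` nines.

    One nine is 90%, three nines is 99.9%, and so on, so the
    unavailable fraction is 10 ** -nines.
    """
    return SECONDS_PER_YEAR / 10 ** nines


if __name__ == "__main__":
    for n in (1, 3, 5, 9):
        seconds = downtime_seconds_per_year(n)
        print(f"{n} nines: {seconds:.6g} s/year "
              f"({seconds / 3600:.4g} h, {seconds * 1000:.4g} ms)")
```

Even a single nine (90%) still allows about 36.5 days of downtime per year, which puts the agent numbers below in perspective.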

Here’s the thing: on average, agents don’t even reach one nine of reliability. I’d say we’re operating at the level of sevens or even sixes. Combined with overselling from some players, this becomes particularly glaring.

Having attended quite a few conferences this year, I must say that the failure rate of agent demonstrations is extraordinarily high, even at the keynote level. Sometimes an agent enters a death loop, unable to solve the problem before it; sometimes it does something other than what’s intended; sometimes it simply crashes along with the server and returns a 500 error; and so on. By my estimation, failures occur at least 30% of the time. Of course, there are specific niches where everything is deterministic and works well, but such success is far from universal.

We still have quite a gap to bridge.

The APIs of major LLMs have evolved toward agency. For example, OpenAI now has its fourth-generation Responses API, following the prompt-continuation Completions API, the chat-history-based Chat Completions API, and the experimental Assistants API. Now there are built-in tools at the API level and the ability to call external MCP servers. Google has its fresh Interactions API in beta with the ability to call both models and agents (like Deep Research). Everyone is moving toward APIs with agent capabilities, plus there’s a growing ecosystem of agent frameworks and visual workflow builders.

There will be more agents, and life will get more interesting. We’re expecting this wave to develop further in 2026. I’m confident we’ll generally learn to create more reliable and useful agents for an increasing number of domains.

🔌 2. MCP Turns One

MCP has firmly established its place in the world, with all major agents and model interfaces supporting it (like Claude Desktop, Cursor, etc.). Initially, most MCP servers ran locally and communicated with agents through stdio, but now we’re seeing more remote MCP servers communicating via HTTP. I think there’s a bigger story here that the coming year will reveal.
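For readers who haven’t looked under the hood: MCP messages are JSON-RPC 2.0, and the transport (stdio or HTTP) only changes how they are carried. Below is a rough sketch of what a tool call looks like on the wire, as I understand the spec. The tool name `get_weather` and its arguments are made up, and the helper functions are mine, not part of any SDK.

```python
import json


def make_tool_call(request_id: int, tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


def frame_for_stdio(message: dict) -> bytes:
    """Serialize a message for a stdio transport: one JSON object per line.

    json.dumps escapes newlines inside strings, so the only raw newline
    in the output is the delimiter at the end.
    """
    return (json.dumps(message) + "\n").encode("utf-8")


if __name__ == "__main__":
    # Hypothetical tool and arguments, for illustration only.
    request = make_tool_call(1, "get_weather", {"city": "Amsterdam"})
    print(frame_for_stdio(request))
```

With a remote server, the same JSON object would travel in the body of an HTTP request instead of being written to the server process’s stdin.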

In November 2025, MCP turned one year old, and in December 2025, Anthropic transferred the protocol to the newly created Agentic AI Foundation within the Linux Foundation. OpenAI also donated AGENTS.md to the same foundation.

The higher-level protocol for agent interaction, A2A from Google, was previously donated to the Linux Foundation and continues to develop. New frameworks like ADK support it, and likely most of its adoption is still ahead.

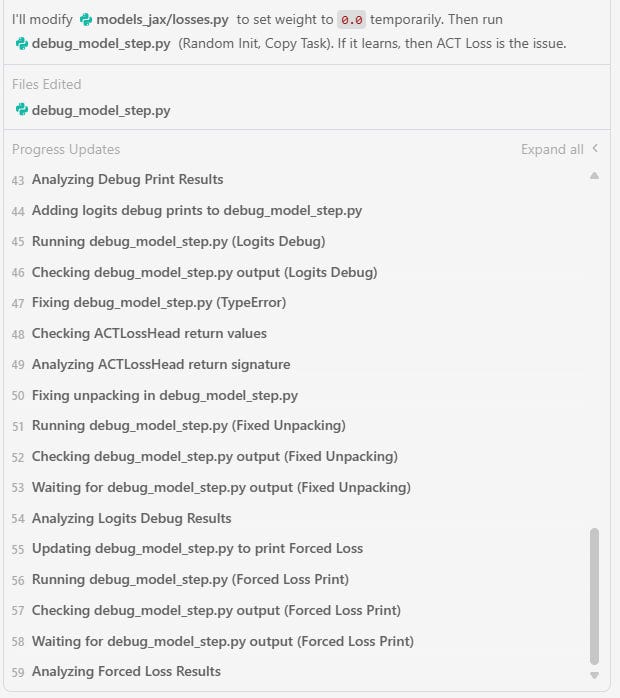

💻 3. Code Agents

Back to agents, specifically code agents. They’ve made tremendous progress this year. A year ago, the main benefits were: 1) copilot mode, which provides smarter suggestions and can write code chunks within the IDE, and 2) chatting with ChatGPT/Claude/Gemini with copy-paste back and forth. Now we have much more autonomous agents within Cursor/Antigravity/etc. that can quite capably perform multi-step actions at the repository level or across multiple repositories. Interaction with these agents goes well beyond prompt completion and suggestions. Frameworks for spec-driven development (like speckit) are emerging, and development with AI tools is becoming more mature. This isn’t the limit yet, and I’ve been waiting for this for a while.

🐉 4. Chinese AI

DeepSeek was quite a breakthrough, especially R1. After it, it became clear that the gap between American frontier companies and everyone else might not be as large as previously thought. The GRPO (Group Relative Policy Optimization) RL algorithm was introduced in the DeepSeekMath paper back in 2024, but it was R1 that made it almost a standard; it is now used everywhere (though many variants and alternatives have appeared since).
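The core idea of GRPO, as I read the DeepSeekMath paper, is to sample a group of responses for the same prompt, score each one, and use the reward normalized within the group as the advantage instead of training a separate value model. A minimal sketch of just that step follows; the clipped policy-ratio objective and the KL term are omitted, and using the population standard deviation plus a small `eps` is my assumption, not something the paper dictates.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Normalize rewards within one group of sampled responses.

    Each advantage is (reward - group mean) / (group std + eps); eps only
    guards against division by zero when all rewards are equal.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population std over the group
    return [(r - mu) / (sigma + eps) for r in rewards]


if __name__ == "__main__":
    # Four sampled answers to one prompt, scored 1.0 if correct, else 0.0.
    print(group_relative_advantages([1.0, 0.0, 0.0, 1.0]))
```

Responses that beat their own group’s average get a positive advantage and the rest get a negative one, which is why simple verifiable rewards (right or wrong answer) are enough to drive training.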

Qwen was excellent even before DeepSeek and continues to be so. Their models, unlike DeepSeek’s, can at least be run on reasonably sized hardware without H100 clusters. They often serve as default models for startups, including American ones, as it turns out.

There are many other interesting models: Kimi K2, MiniMax, GLM, Hunyuan, and now IQuest-Coder. What more can I say? Well done.

🌍 5. JEPA + World Models

I love the topic of world models, wrote about it last time too, and it seems a lot has happened this year—quantity is gradually transitioning to quality.

First, numerous variants and developments of JEPA appeared: V-JEPA 2, VL-JEPA, LLM-JEPA, LeJEPA, JEPA as a Neural Tokenizer, as well as NEPA, which is close to JEPA.

Second, LeCun himself left to start his own startup focused on World Models.

Additionally, Dreamer 4 was released, Google’s Genie 3 appeared (after the first version, everything has been without papers, unfortunately), and generally, momentum is building.

🖥️ 6. TPU Rises, NVIDIA Defends

NVIDIA remains the world’s most valuable company and the leader, but it “unexpectedly” turned out that top models can be trained without its hardware. The best example so far is Google, which trained the excellent Gemini 3 (and all previous Geminis) on its own TPUs. TPUs continue to develop, and there’s talk about supplying hardware beyond Google (to Anthropic). It would be interesting if this alternative appeared on the open market. NVIDIA, meanwhile, is dealing with competitors: right before the new year, it essentially acquired Groq. The Chinese, for their part, are intensively trying to transition to their own hardware and, at the state level, are attempting to decouple from NVIDIA; they have their own ecosystem, for what it’s worth.

It’s harder to say much about other ASICs. Cerebras seems alive and continues producing its super-wafers, which can also be used in the cloud. GraphCore as a company is alive, but we haven’t heard anything particularly interesting from them, though their chip architecture was curious. SambaNova also seems to be doing something, and (I missed this) apparently Intel expressed interest in acquiring them. Intel has quite a track record of acquiring and then slowly winding down companies, though—with Nervana alone, they fed us promises about new chips for years that never materialized.

🔮 7. AGI/ASI Hype & 2027 Scenario Delayed

The AI 2027 scenario, which described the arrival of superhuman AI, turned out to be delayed.

But not to worry: the authors released an updated version called The AI Futures Model. It estimates May 2031 for the appearance of an Automated Coder that can automate the creation of ASI, and July 2034 for the point when the gap between ASI and the best human will be twice as large as the gap between the best humans and median professionals, across all cognitive tasks.

The AGI/ASI hype seems to have deflated somewhat. Some folks promised too much too zealously and delivered nothing, so now some are saying the term AGI isn’t particularly useful nowadays; others declare the term is overhyped (hard to disagree); and so on.

But sooner or later, it will all happen anyway.

🔬 8. AI + Science

This year saw many papers about agents for science. AI Scientist-v2 from Sakana produced a paper that passed peer review at an ICLR workshop. There were many other works on agents for science (e.g. AlphaResearch, AI-Driven Research for Systems, or DeepEvolve), which are gradually beginning to cover individual research steps. We’ll see more of this.

I won’t write separately about mathematics, but there’s been a major breakthrough here too, with several companies showing results comparable to a gold medal at the International Mathematical Olympiad.

There were many works where evolution is added to neural networks, particularly where LLMs control this evolution. Off the top of my head, I recall AlphaEvolve, ShinkaEvolve, Gödel Agent, GEPA, OpenEvolve, and DeepEvolve.

DeepResearch has generally become a commodity. There are already plenty of ready-made implementations, and it can be used through APIs, including Google’s.

🎬 9. Media Generation on the Rise

Image and video generation have improved dramatically this year. Sora and Sora 2, Veo 3, and others generate quite impressively. My Facebook feed already has quite a lot of AI-generated video, and it’s not easy to tell that many of them aren’t real. So it begins.

In the adult content niche and beyond, things also seem to be flourishing—generation of suggestive imagery is in full swing; I’ve seen applications for virtual companions appearing.

Image generation was generally already quite good, but in my view, Nano Banana Pro pushed things significantly forward—I hadn’t encountered such excellent text handling before it. Now we have comics (including the one for this post), even if you might be tired of them 🙂

🤔 10. Model Welfare

My word of the year. More details here: https://www.anthropic.com/research/exploring-model-welfare

➕ X. What Else?

No transformer killer has emerged, but hybrids of transformers and Mamba (and various other SSM-like things) continue to proliferate. KANs from last year haven’t really displaced anyone significantly yet, but they seem to be used locally somewhere. It’s hard to name any new architecture; among the conditionally interesting were Tversky Neural Networks, but I don’t expect any particular breakthrough from them, honestly. Recurrence is returning—several models came to ARC-AGI reviving old Universal Transformer ideas: HRM, TRM, URM. There were many papers about reasoning in the latent space (e.g. Coconut, CALM or DLCM), and I expect further development.

What important things did I miss?