Superconducting Supercomputers

A recent IEEE Spectrum article discusses superconducting computers and the full technology stack being developed for them by Imec, an international research organization headquartered in Belgium.

Current discussions of trillion-dollar clusters assume that a single cluster could consume around 20% of total US electricity production, which makes energy one of the major bottlenecks for such undertakings. Even earlier, a 2015 forecast for computing in general predicted that by 2040 the energy required for computing would exceed global production if typical mainstream computing systems continued to be used (see Figure A8). Against this backdrop, these developments seem very relevant.

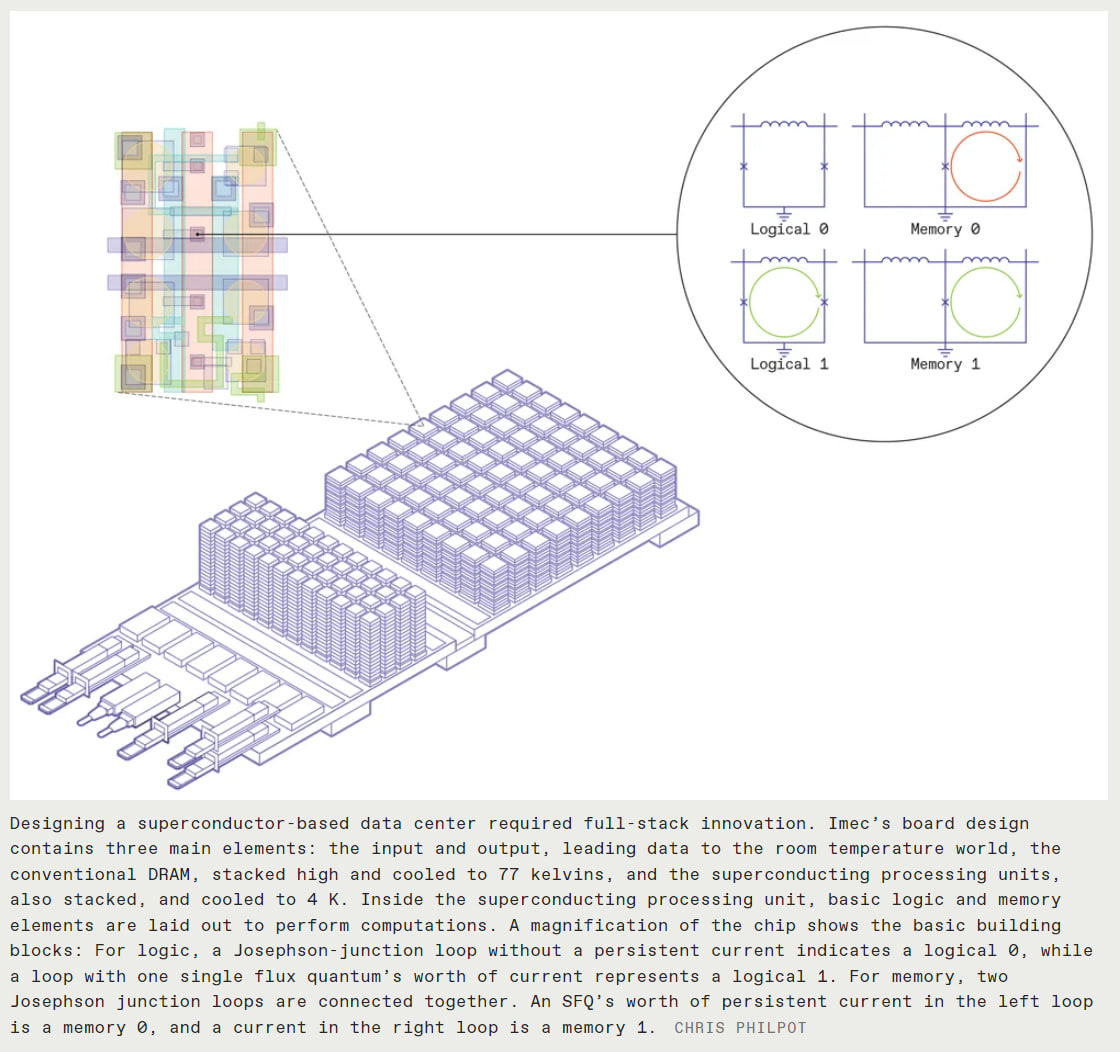

Imec is developing solutions at every level of the stack: materials for superconducting hardware, new circuit designs for logic and memory, and architectural solutions for integration with conventional DRAM.

The new circuits rely on the Josephson effect in devices called Josephson junctions (JJs). In a JJ, two superconducting layers are separated by a thin dielectric layer. Current tunnels through this layer without any voltage drop as long as it stays below a critical value. When the critical current is exceeded, the junction emits a voltage pulse, which sets up a current that then keeps flowing indefinitely through the superconducting loop containing the JJ. Such loops can serve as logic elements ("current flows" means 1, "no current" means 0) and as memory (two coupled loops: if current flows in the left one, a 1 is stored; if it flows in the right one and not in the left, a 0 is stored).
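The encoding described above can be illustrated with a deliberately simplified toy model. This is only a sketch of the bookkeeping (threshold, persistent current, bit encoding), not a physical simulation; the class names `ToyLoop` and `ToyMemoryCell`, the arbitrary current units, and the `write`/`read` methods are all assumptions made for illustration and do not come from the article.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ToyLoop:
    """Toy superconducting loop with one Josephson junction (not real physics)."""
    critical_current: float = 1.0  # arbitrary units, assumed for illustration
    circulating: bool = False      # True once a persistent current is flowing

    def drive(self, current: float) -> bool:
        """Apply a drive current; return True if the junction emits a pulse."""
        if abs(current) > self.critical_current:
            # Exceeding the critical current starts a persistent loop current.
            self.circulating = True
            return True
        return False

    def logic_value(self) -> int:
        """'Current flows' is read as 1, 'no current' as 0."""
        return 1 if self.circulating else 0


@dataclass
class ToyMemoryCell:
    """Two coupled loops: current in the left loop stores 1, in the right stores 0."""
    left: ToyLoop = field(default_factory=ToyLoop)
    right: ToyLoop = field(default_factory=ToyLoop)

    def write(self, bit: int) -> None:
        # Hypothetical write: put the current in exactly one of the two loops.
        self.left.circulating = (bit == 1)
        self.right.circulating = (bit == 0)

    def read(self) -> Optional[int]:
        if self.left.circulating:
            return 1
        if self.right.circulating:
            return 0
        return None  # neither loop carries current: nothing stored yet


loop = ToyLoop()
below = loop.drive(0.5)            # below the critical current: no pulse
value_before = loop.logic_value()
above = loop.drive(1.5)            # above the critical current: pulse
value_after = loop.logic_value()

cell = ToyMemoryCell()
cell.write(0)
stored = cell.read()
```

Real JJ logic families handle timing, pulse propagation, and readout in ways this model ignores entirely; it only mirrors the 0/1 convention given in the text.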

The board the authors propose, called a superconductor processing unit (SPU), carries superconducting logic circuits and JJ-based static memory (SRAM), cooled with liquid helium to 4 K. The same board also holds conventional, non-superconducting CMOS DRAM, connected through a glass insulator and cooled to 77 K, with connectors leading from there out to the room-temperature world.

A system of one hundred such boards, about the size of a shoebox (20 x 20 x 12 cm), has been simulated. It can deliver 20 exaflops (1 exaflop is 10^18 floating-point operations per second) in bf16 while consuming only 500 kilowatts. The top supercomputer, Frontier, offers slightly over 1 exaflop, but that is in fp64, not bf16 (as a rough estimate, the bf16 figure could be about 4x higher). It also consumes far more power, roughly 20 megawatts, or about forty times as much. The DGX H100 with 8 GPUs claims 32 petaflops in fp8 and, accordingly, 16 petaflops in bf16, which is 2 petaflops per GPU, so 20 exaflops would require about 10,000 H100 GPUs. Overall, it's impressive.
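The comparison above is simple arithmetic and can be checked with a short script. This is a back-of-the-envelope sketch that uses only the figures quoted in the text; the variable names are made up for illustration, and the 4x fp64-to-bf16 factor for Frontier is the rough estimate from the paragraph, not a measured value.

```python
PETA = 10**15
EXA = 10**18

# Simulated superconducting system (figures quoted in the text).
spu_bf16_flops = 20 * EXA
spu_power_watts = 500_000          # 500 kW

# DGX H100: 8 GPUs, 32 PFLOPS in fp8, taken as half that in bf16.
gpus_per_dgx = 8
dgx_bf16_flops = 16 * PETA
per_gpu_bf16_flops = dgx_bf16_flops // gpus_per_dgx

gpus_needed = spu_bf16_flops // per_gpu_bf16_flops
dgx_needed = gpus_needed // gpus_per_dgx

# Frontier: about 1 exaflop in fp64, times the rough 4x estimate for bf16.
frontier_bf16_estimate = 1 * EXA * 4
speedup_vs_frontier = spu_bf16_flops / frontier_bf16_estimate

# Energy efficiency of the simulated system, in bf16 FLOPS per watt.
spu_flops_per_watt = spu_bf16_flops / spu_power_watts

print(f"bf16 per H100 GPU:   {per_gpu_bf16_flops / PETA:.0f} PFLOPS")
print(f"H100 GPUs needed:    {gpus_needed:,}")
print(f"DGX systems needed:  {dgx_needed:,}")
print(f"vs Frontier (est.):  {speedup_vs_frontier:.0f}x")
print(f"SPU efficiency:      {spu_flops_per_watt / 1e12:.0f} TFLOPS/W")
```

The script confirms the 10,000-GPU figure (1,250 DGX systems) and puts the simulated system at about 5x the estimated bf16 throughput of Frontier.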

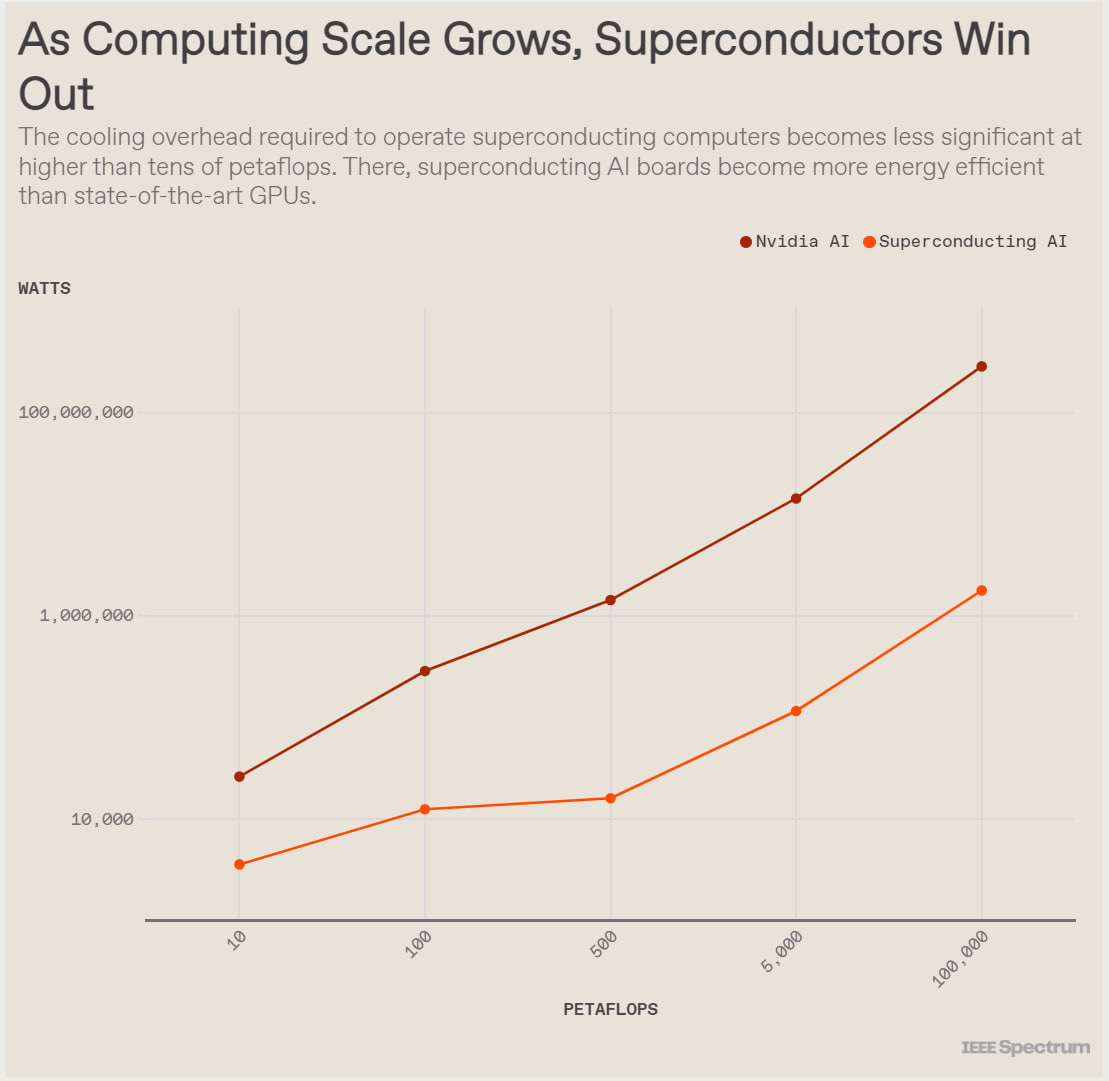

Yes, cooling requires energy (and helium, which is expensive and scarce), but above a certain scale, around ten petaflops, the overhead fully pays off and the superconducting supercomputer comes out ahead of a conventional GPU-based one.

Other interesting bonuses may include easier integration with quantum computers, which require similar cooling, and with thermodynamic computers such as those from Extropic, which also use JJs.

This is potentially a very exciting development. Perhaps we won’t need giga-datacenters the size of football fields with nuclear power plants nearby, but rather our own small superconducting supercomputer in the neighborhood? With its own local AI.